Scaling with Node.js

In this article, we discuss effective practices for scaling Node.js web applications. Most developers are already familiar with basic Node.js usage, so we focus on more advanced tactics for scaling as the number of users grows.

Node.js

Node.js is a JavaScript runtime environment built on Chrome’s V8 JavaScript engine. It implements the reactor pattern, a non-blocking, event-driven I/O model. Node.js is well suited to building fast, scalable network applications because it can handle a large number of simultaneous requests with high throughput.

How to improve scalability

Before going further, what does “scalable” mean? A scalable application is an expandable one: many users can access it from anywhere at the same time, and it can handle a large increase in users, workload, or transactions without undue strain.

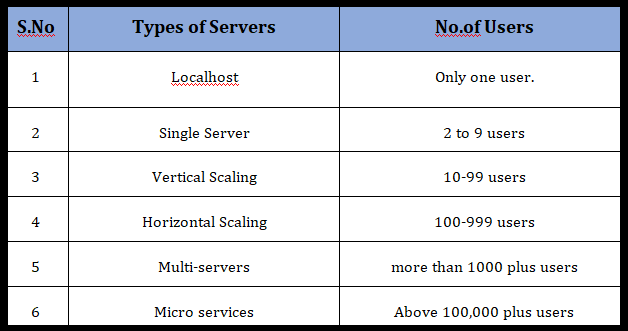

To improve scalability, we walk through the options below, ordered by the number of concurrent users each one can serve.

1. Localhost

Any application that runs on a developer’s machine for development purposes is hosted locally. It generally serves only one user, so there is no need to worry about scaling.

2. Single server

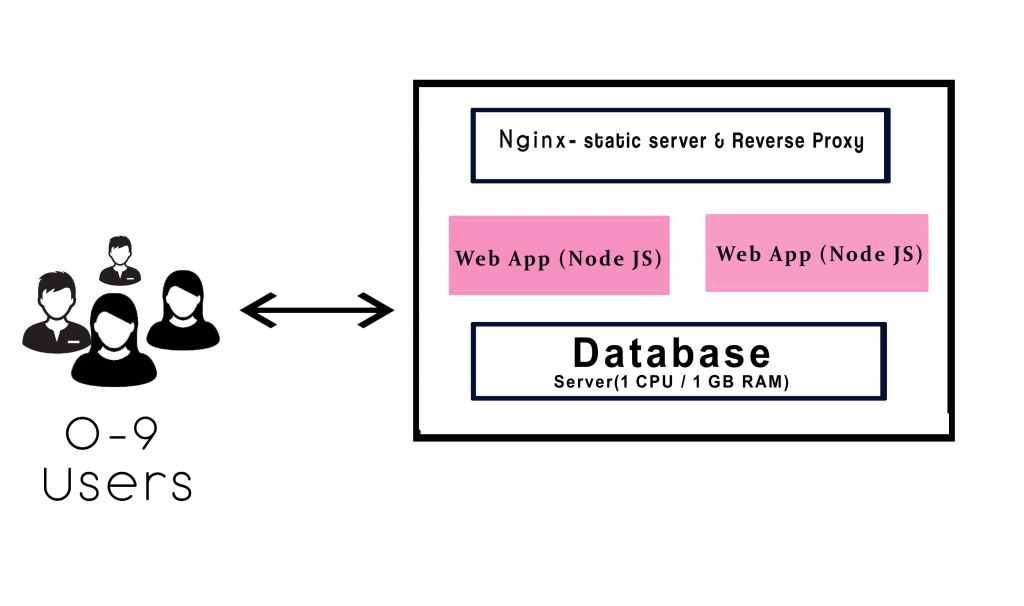

A single-server system can accommodate around 1 to 9 concurrent users. If your application will only be used locally, it can be deployed locally as well, and a single server is fine for that. To host the Node.js application, you can use Nginx as the web server.

For a few users, a single server is good enough: it is simple to set up and efficient at this scale. The requirements are only one CPU and 1 GB of RAM, which is roughly equivalent to an AWS t2.micro instance.

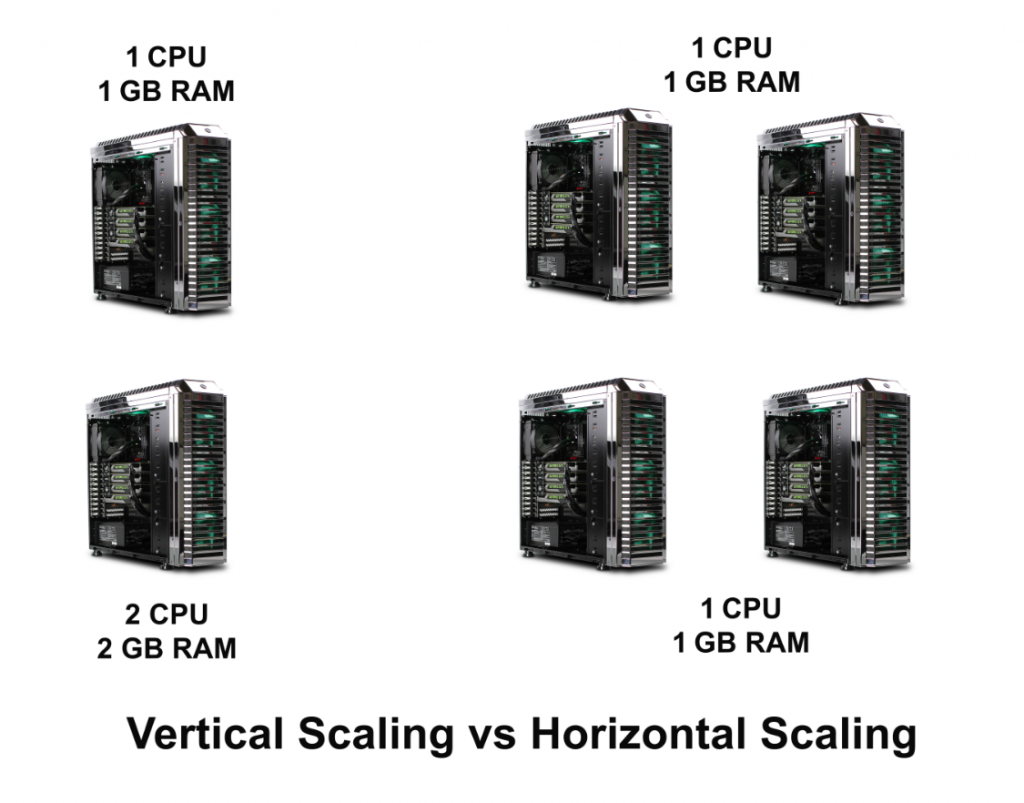

3. Vertical scaling

The term “vertical” means adding extra capacity or power to a single component. When the server starts to respond more slowly or takes longer to execute requests, it is time to move to vertical scaling. At this stage the requirements rise to 4 GB of RAM, which is equivalent to an AWS t2.medium instance.

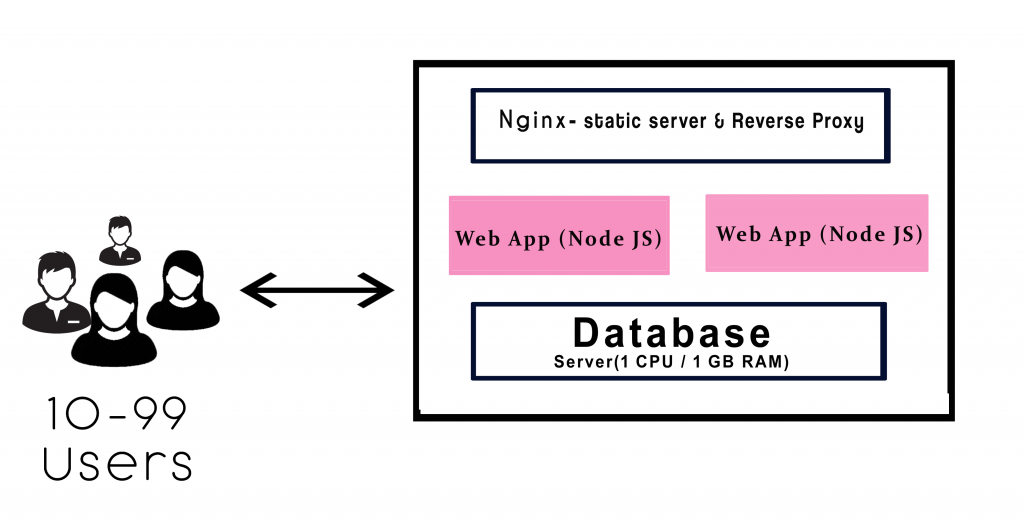

The diagram above shows the workflow in vertical scaling.

a. Two instances of Node.js run on the server, so you can deploy or update with zero downtime.

b. Nginx handles the load balancing.

c. While server 1 is being upgraded, the other server keeps serving requests. Continue this process until the buffer is empty.

d. Nginx receives all user requests and performs two functions: static file server and reverse proxy.

Static: Nginx serves static files such as CSS, JS, and images directly, so these requests do not disturb the web app.

Reverse Proxy: Nginx accepts each request that the application needs to resolve and forwards it to a Node.js instance.
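The routing decisions described in steps a to d can be sketched in plain JavaScript. This is only an illustration of the logic, not how Nginx is implemented or configured: `createBalancer`, `drain`, `restore`, the extension list, and the two local addresses are all hypothetical names chosen for this example. The sketch assumes the path carries no query string.

```javascript
// File extensions treated as static assets (illustrative, not exhaustive).
const STATIC_EXTENSIONS = new Set(['.css', '.js', '.png', '.jpg', '.svg']);

// Builds a tiny round-robin balancer over a fixed list of app instances.
function createBalancer(targets) {
  const inRotation = new Set(targets);
  let cursor = 0;

  return {
    // Take an instance out of rotation before upgrading it.
    drain(target) {
      inRotation.delete(target);
    },
    // Put an upgraded instance back into rotation.
    restore(target) {
      if (targets.includes(target)) inRotation.add(target);
    },
    // Decide how one request path should be handled.
    route(path) {
      const dot = path.lastIndexOf('.');
      const ext = dot === -1 ? '' : path.slice(dot).toLowerCase();
      if (STATIC_EXTENSIONS.has(ext)) {
        return { kind: 'static', target: null }; // served without touching the app
      }
      // Round-robin over the instances that are currently in rotation.
      for (let i = 0; i < targets.length; i += 1) {
        const candidate = targets[(cursor + i) % targets.length];
        if (inRotation.has(candidate)) {
          cursor = (cursor + i + 1) % targets.length;
          return { kind: 'proxy', target: candidate };
        }
      }
      return { kind: 'unavailable', target: null }; // every instance is drained
    },
  };
}

// Hypothetical addresses for the two local Node.js instances.
const balancer = createBalancer(['http://127.0.0.1:3001', 'http://127.0.0.1:3002']);
balancer.drain('http://127.0.0.1:3001');   // instance 1 is being upgraded
console.log(balancer.route('/api/users')); // handled by instance 2 in the meantime
balancer.restore('http://127.0.0.1:3001'); // instance 1 rejoins the pool
```

Because a drained instance is skipped rather than removed, upgrading the instances one at a time never leaves the pool empty, which is the zero-downtime property the diagram describes.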

4. Horizontal scaling

Scaling horizontally means adding more machines to your pool of resources. This approach suits enterprises in the range of 100 to 1,000 employees. When app responses slow down because of the database, you have to upgrade it to 16 GB of RAM. Databases such as Cassandra and MongoDB are well suited to horizontal scaling, because they let you grow from a smaller setup to a bigger one.

Companies like Google, Facebook, eBay, and Amazon use horizontal scaling.

Differences

In horizontal scaling, you can add more machines to scale dynamically. If one system fails, another system takes over its work, so a single failure does not stop the service. Horizontal scaling also brings high I/O concurrency, reduces the load on existing nodes, and increases disk capacity.

In vertical scaling, the data resides on a single node, so it slows down easily as the load increases. If that node fails, the whole system may collapse. Horizontal scaling is also somewhat more cost-effective than vertical scaling.
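To make the point about reducing the load on existing nodes concrete, here is a minimal sketch that spreads keys across a pool of nodes with a simple hash. It is a simplification for illustration only: `hashKey`, `pickNode`, `countPerNode`, and the node names are hypothetical, and databases such as Cassandra and MongoDB use their own, more sophisticated partitioning schemes.

```javascript
// Simple non-cryptographic string hash (djb2 variant), used only for illustration.
function hashKey(key) {
  let hash = 5381;
  for (const ch of key) {
    hash = ((hash * 33) ^ ch.codePointAt(0)) >>> 0; // keep it an unsigned 32-bit value
  }
  return hash;
}

// Assign a key to one node of the pool.
function pickNode(key, nodes) {
  return nodes[hashKey(key) % nodes.length];
}

// Count how many keys land on each node.
function countPerNode(keys, nodes) {
  const counts = Object.fromEntries(nodes.map((node) => [node, 0]));
  for (const key of keys) {
    counts[pickNode(key, nodes)] += 1;
  }
  return counts;
}

// 1,000 made-up user keys, first on two nodes, then on four.
const keys = Array.from({ length: 1000 }, (_, i) => `user:${i}`);
console.log(countPerNode(keys, ['node-1', 'node-2']));
console.log(countPerNode(keys, ['node-1', 'node-2', 'node-3', 'node-4']));
```

With the same set of keys, each node in the larger pool holds a smaller share, which is the effect horizontal scaling relies on. Note that plain modulo hashing moves most keys when the pool size changes; that is one reason real systems use other schemes.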

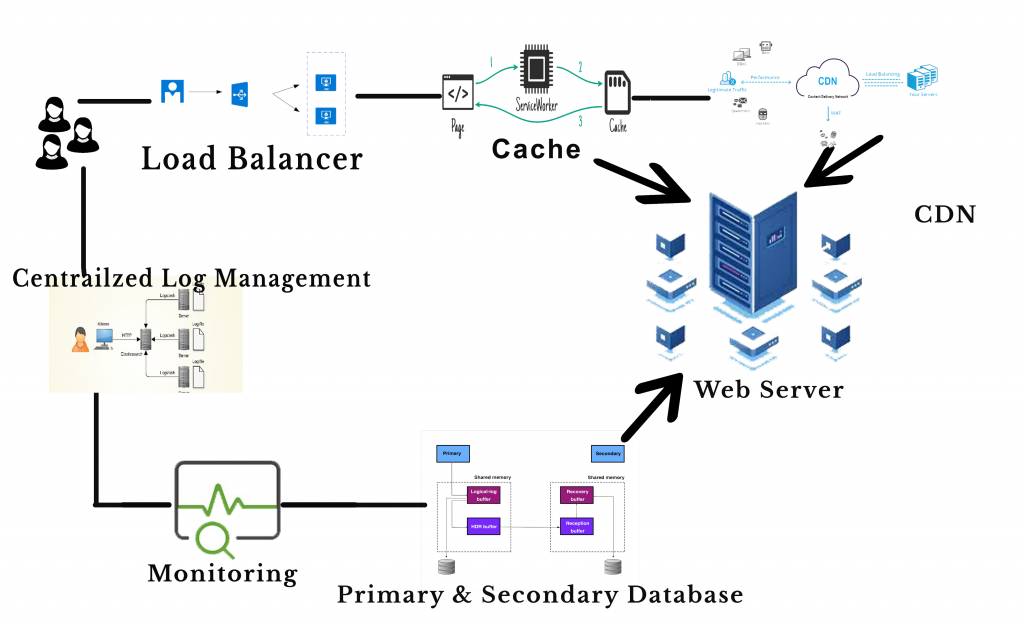

5. Multi-servers

As the business grows, it is time to add more servers to host the Node.js application. A multi-server setup can accommodate thousands of concurrent users. To move from the previous setup to multiple servers, follow these steps:

1. Add a load balancer and app units.

2. Set up multiple availability zones (AZs) in a region, connected through low-latency links.

3. Split out the static files for easier maintenance.

4. Use a CDN to serve files such as images, videos, CSS, and JS.

Amazon provides load balancing through Elastic Load Balancer (ELB). It is available across the availability zones. The service routes traffic only to active hosts and can manage up to 1,000 instances. With this setup you can scale either horizontally or vertically.
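A load balancer can only route to active hosts if each host reports whether it is healthy. The sketch below shows one possible way for a Node.js app to expose that signal. The `/health` path, `createHealthHandler`, and the `isReady` callback are assumptions made for this example; the path and the status codes the load balancer expects depend on how its health check is configured.

```javascript
const http = require('node:http');

// Hypothetical health endpoint path; the load balancer would be configured to poll it.
const HEALTH_PATH = '/health';

// Returns a handler that answers health checks and ignores everything else.
function createHealthHandler(isReady) {
  return function handleHealth(req, res) {
    if (req.url !== HEALTH_PATH) return false; // not a health check
    const healthy = isReady();
    res.statusCode = healthy ? 200 : 503;
    res.setHeader('Content-Type', 'application/json');
    res.end(JSON.stringify({ status: healthy ? 'ok' : 'unavailable' }));
    return true;
  };
}

// Example wiring: readiness is a simple flag the app flips during startup and shutdown.
let ready = true;
const handleHealth = createHealthHandler(() => ready);

const server = http.createServer((req, res) => {
  if (handleHealth(req, res)) return;
  res.statusCode = 200;
  res.end('Hello from the app\n');
});
// Call server.listen(<port>) to start serving; the port is left to the deployment.
```

Setting `ready` to `false` before a deployment makes the host answer 503, so a load balancer that treats non-200 responses as unhealthy would stop sending it traffic.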

6. Microservices

Many large companies, such as Netflix, Uber, and Amazon, use microservices. The goal of microservices is to separate services such as search, build, configure, and other management functions from the database instances and deploy each one as a separate microservice. Each service takes care of its own tasks and communicates with the other services via an API.

Traditionally, we use a monolithic design with a monolithic database. In a monolith, all modules are composed together in a single piece, so it is hard to extend the application to serve many more users. As your business grows, you will need to move to microservices, which takes your production setup to the next level.
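As a rough illustration of services that are deployed separately and reached via an API, the sketch below maps the first segment of a request path to the base URL of the service that owns it. The service names come from the paragraph above; the internal host names, ports, and the `resolveServiceUrl` helper are hypothetical.

```javascript
// Hypothetical registry: service name -> base URL of its own deployment.
const serviceRegistry = new Map([
  ['search', 'http://search.internal:4001'],
  ['build', 'http://build.internal:4002'],
  ['configure', 'http://configure.internal:4003'],
]);

// Translate an incoming path like "/search/items/42" into the URL of the
// microservice that should handle it, or null if no service owns the path.
function resolveServiceUrl(path, registry = serviceRegistry) {
  const [, serviceName, ...rest] = path.split('/');
  const baseUrl = registry.get(serviceName);
  if (!baseUrl) return null;
  return `${baseUrl}/${rest.join('/')}`;
}

console.log(resolveServiceUrl('/search/items/42'));
console.log(resolveServiceUrl('/billing/invoices'));
```

Because every service sits behind its own URL, each one can be scaled, deployed, or replaced without touching the others, as long as its API stays the same.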

Conclusion

We hope this article helps you with scalability issues and gives you a better understanding of how to make good use of the available services.

Centizen

A Leading IT Staffing, Custom Software and SaaS Product Development company founded in 2003. We offer a wide range of scalable, innovative IT Staffing and Software Development Solutions.

Contact Us

USA: +1 (971) 420-1700

Canada: +1 (971) 420-1700

India: +91 63807-80156

Email: contact@centizen.com
